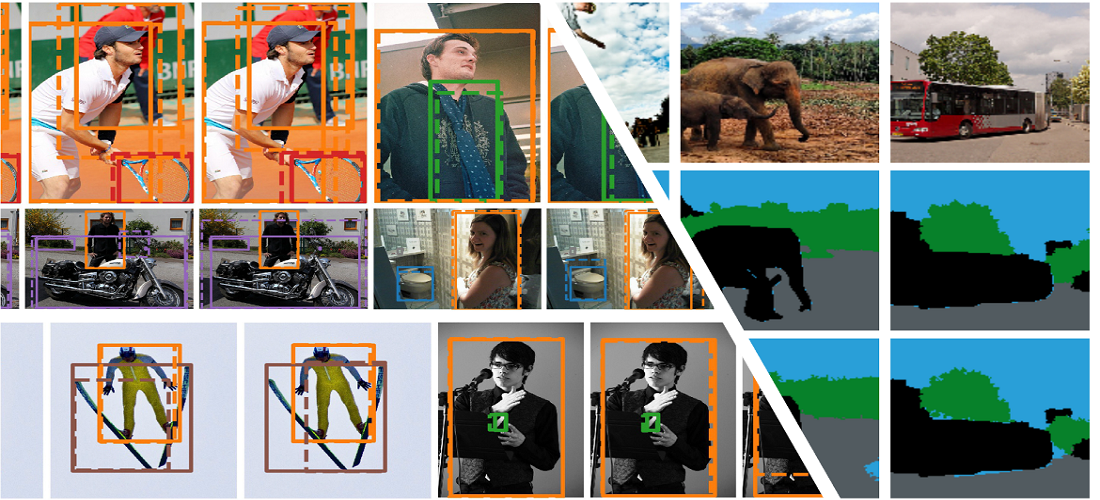

Advanced Visual Perception

Fundamental research in scene understanding, 3D reconstruction, and robust detection. We develop algorithms that can handle complex lighting, occlusions, and dynamic environments.

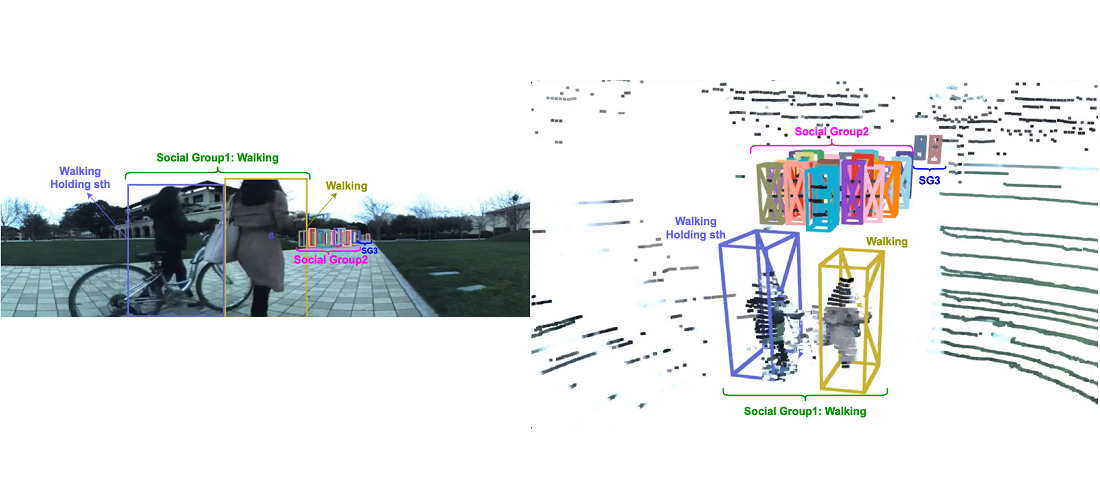

Forecasting & Behavior

Human behavior prediction and multi-modal trajectory forecasting. We focus on social and physical constraints to understand how humans move and interact in crowded spaces.

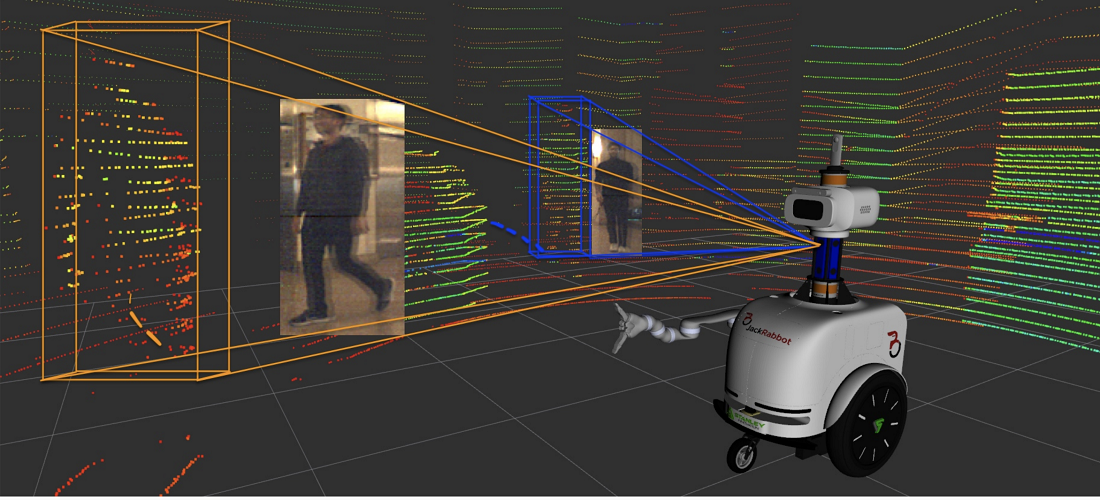

Embodied Intelligence & Navigation

Developing robotic systems capable of autonomous navigation and task planning. Our research integrates perception directly into the control loop for intelligent interaction.